On May 13 2024, OpenAI released their newest multimodal model, GPT-4o. Based on an end-to-end multimodal architecture, this new model, dubbed “omni” is able to seamlessly handle text, visual and audio input in a single neural network.

While the OpenAI Demos showed GPT-4o being used with OpenAI native apps, users looking to use the multimodal capabilities via the API will be met with this OpenAI statement:

GPT-4o in the API supports understanding video (without audio) via vision capabilities. Specifically, videos need to be converted to frames (2-4 frames per second, either sampled uniformly or via a keyframe selection algorithm) to input into the model

And while OpenAI presents uniform sampling and keyframe selection as sampling strategies, I’d like to introduce a semantic sampling strategy, which uses Vision Transformers to infer the semantic meaning of video frames, and create chunks out of semantically-similar parts of the video.

Semantic Router to the rescue

In previous blog posts, I highlighted Semantic Router’s capabilities for semantically chunking text data. We achieve this by embedding multimodal data, ordering it in a sequential manner (consecutive image frames representing a video, sentences representing a paragraph), and using the semantic similarity of these sequential “documents” to form chunks.

These chunks can be formed by either grouping (similar sentences form a paragraph), or splitting (a large single string representing an article split into constituent paragraphs).

Expanding Semantic Chunking to multimodal data

Today let’s explore multimodal semantic chunking, and create a new sampling strategy for use with OpenAI’s GPT-4o model.

The toy example is simple: take a video, and ask GPT-4o to tell us what’s happening throughout the video. For this example, we will use a video from the public domain, namely “We are going on a Bull Run”, by Garage419.

The code

Let’s start by pulling down our video from Google’s Public Archive, and breaking it up into frames:

import cv2

from PIL import Image

vidcap = cv2.VideoCapture(

"http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/WeAreGoingOnBullrun.mp4"

)

frames = []

success, image = vidcap.read()

while success:

frames.append(image)

success, image = vidcap.read()

image_frames = list(map(Image.fromarray, frames))

We’ll go ahead and create embeddings out of our video frames by using Semantic Router’s VitEncoder, and then chunk these frames using Semantic Router’s ConsecutiveSimSplitter. We’re going to skip over the concept of Encoders & Splitters in this post, but if you’d like to learn more, please read these past posts on Semantic Encoders and Semantic Splitters.

from semantic_router.encoders import VitEncoder

from semantic_router.splitters.consecutive_sim import ConsecutiveSimSplitter

encoder = VitEncoder(device="mps")

splitter = ConsecutiveSimSplitter(encoder=encoder, score_threshold=0.5)

splits = splitter(docs=image_frames)

We now have a collection of splits. As you can see below, each split contains at least 1 image. The images inside the splits are ordered sequentially, and all images within a split can be considered semantically similar.

[

DocumentSplit(

docs=[

<PIL.Image.Image image mode=RGB size=1280x720 at 0x109555FD0>

],

is_triggered=True,

triggered_score=0.42090556453933675,

token_count=None,

metadata=None

),

DocumentSplit(

docs=[

<PIL.Image.Image image mode=RGB size=1280x720 at 0x17F823CD0>,

...,

<PIL.Image.Image image mode=RGB size=1280x720 at 0x370CA0B80>

],

is_triggered=True,

triggered_score=0.15758341672287035,

token_count=None,

metadata=None

),

...

]

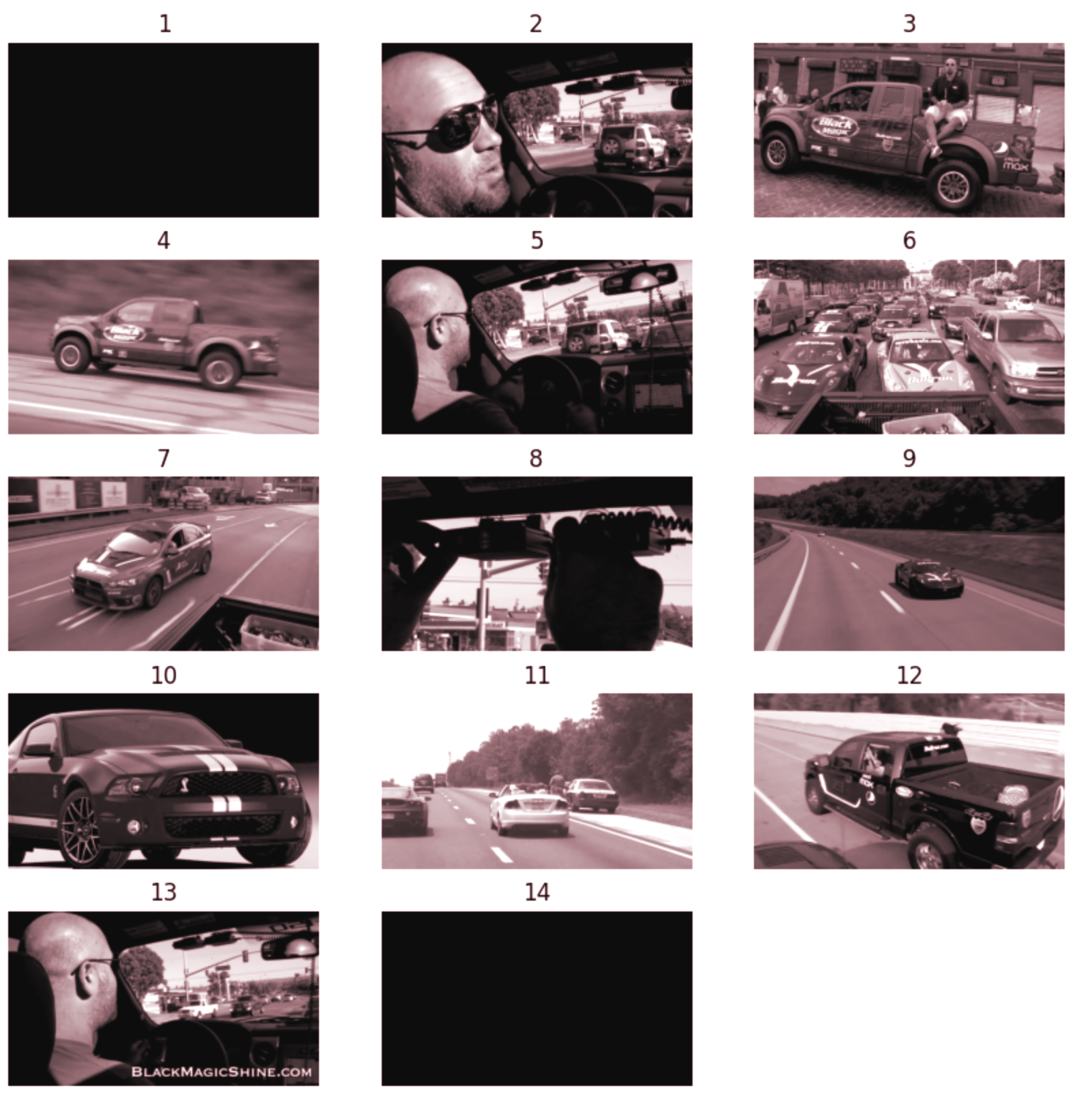

We can represent these splits visually by grabbing the middle frame from each of the splits:

“Hey GPT-4o, what do you see?”

This video is quite short, barely 47s. However, we can visually observe that the video involves the narrator introducing a scene, action shots of different cars, and a police encounter. The video ends with the narrator and a black screen.

We also reduced the video from 1139 frames to just 14. This will help us save API costs while maintaining accuracy.

Let’s go ahead and use OpenAI’s Python library to ask GPT-4o to tell us what’s happening in the video. To do this, we’ll have to first base64-encode the frames from our semantic splits

b64_img_messages = []

for split in splits:

# Get the middle frame from each split

middle_frame = split.docs[len(split.docs) // 2]

# Get image bytes

frame_bytes = io.BytesIO()

middle_frame.save(frame_bytes, format="JPEG")

# Base64-encode the image bytes

b64_img = base64.b64encode(frame_bytes.getvalue()).decode("utf-8")

b64_img_messages.append(

{

"type": "image_url",

"image_url": {

"url": f"data:image/jpeg;base64,{b64_img}"

}

}

)

Now that all our visual data has been prepared and base64-encoded, let’s call OpenAI’s API:

import openai

client = openai.Client()

response = client.chat.completions.create(

model="gpt-4o",

messages=[

{

"role": "user",

"content": [

{

"type": "text",

"text": "The following series of images are sampled frames from a video, in chronological order. What's happening in the video?"

},

*image_contents,

]

}],

stream=False,

)

print(response.choices[0].message.content)

The video follows a participant's experience in a car rally or road race event. Here is a breakdown of the images and the probable sequence of events:

1. **First Image**: The screen is black, possibly indicating the start of the video before any action begins.

2. **Second Image**: A person is shown inside a vehicle, implying they are about to start a journey or race. The scene outside the car shows traffic, and the driver appears to be explaining something.

3. **Third Image**: The same or another participant is shown with a branded truck, likely talking about the vehicle or thanking sponsors. The branding and presence of multiple people suggest a gathering, possibly before the start of the event.

4. **Fourth Image**: The truck is captured in motion, indicating the race or rally has begun.

5. **Fifth Image**: The driver is once again shown inside the vehicle, possibly adjusting a device mounted on the windshield. This could be a GPS, camera, or some related equipment for the race.

6. **Sixth Image**: A busy street filled with multiple cars, all likely participants of the rally or car event.

7. **Seventh Image**: Another participating car, sporting event-related decals, is shown driving, emphasizing the competitive aspect of the event.

8. **Eighth Image**: Close-up of the driver setting up or adjusting equipment inside the vehicle, possibly a monitoring device or a radar detector.

9. **Ninth Image**: A high-speed shot of a sleek sports car involved in the rally, emphasizing the dynamic pace of the event.

10. **Tenth Image**: A standalone sports car is shown, representing the kind of vehicles participating in the event.

11. **Eleventh Image**: A traffic stop involving a police vehicle and some participants, likely highlighting the challenges faced during the rally.

12. **Twelfth Image**: Another team in a different branded truck, seen interacting during the rally, showcasing different participants and their setups.

13. **Thirteenth Image**: The driver again inside the vehicle with the video URL showing, likely marking the end of the main content and leading to a call-to-action for viewers to visit the website.

14. **Fourteenth Image**: The screen goes black again, indicating the conclusion of the video.

The entire sequence portrays a narrative of preparation, participation, and the experience of being part of a road race or rally event, highlighting the vehicles, participants, and occasional hurdles encountered during the journey.

That call cost us around $0.045, and while it did use around 8,000 context tokens, we managed to abide by OpenAI’s 2-4 frames per second requirement for GPT-4o, while not losing the semantic meaning of the video.

Semantic Router is open source, we welcome contributions

Semantic Router is an open source library that allows developers to deterministically steer LLMs, create semantic chunks and more. If you enjoyed this blog post, please star us on Github - aurelio-labs/semantic-router.